SWaP changes image processing

December 17, 2013

Situational awareness is essential for survival on the battlefield. But while satellites and large surveillance resources packed with sensors and signal processing hardware serve the needs of higher echelons, it has traditionally been difficult to get data to small detachments and individual soldiers within tactical timelines.

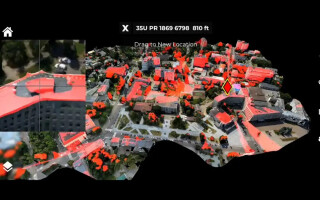

Meanwhile, demand for real-time video is accelerating at all levels. A squad leader, soldier, or marine needs to see what’s happening over the next hill and can’t wait for the intelligence to trickle down from higher headquarters. But even this localized information flow could swamp a viewer in the heat of battle, making it easy to miss a crucial detail. Thus, there is a premium on real-time image processing that speeds detection and tracking so that soldiers can respond to threats in time.

The demand for real-time information at the tip of the spear has driven downsizing not only of the surveillance vehicles, sensors, motors, and gimbals, but also of image processing hardware (see sidebar). Instead of the typical trend toward ever more capability at ever-increasing weight and cost, this sector has emphasized the opposite – just enough capability in the smallest possible package. As the unmanned tactical surveillance industry says, every ounce counts. Reduced Size, Weight, and Power (SWaP) mean longer dwell times, heightened situational awareness, and a greater chance of survival.

Stabilization and tracking

In real-time video processing, two key tasks are stabilization and tracking. Stabilization means removing the motion of the platform and the sensor from the video output, so that the viewer can focus on the scene without experiencing vertigo. This is important because the smaller the platform, the larger the bounce. Tracking means keeping the user-selected target in the center of the sensor’s field of view. Tracking requires sending steering commands – expressed in pixels or angles – to the gimbal, based on target positioning information extracted from the raw video data. Conducted in a closed loop between the computing resource and the sensor, the tracking function also filters the data via preprocessing algorithms that detect target edges and size, helping the sensor stay locked on.
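The closed tracking loop described above can be illustrated with a minimal Python sketch. It is not the method used by any particular product: the pinhole camera model, the 30-degree horizontal field of view, the proportional gain, and all function names are assumptions chosen for illustration. The 720 by 576 frame size corresponds to digitized standard-definition PAL video. The sketch converts the target's pixel offset from the frame center into pan and tilt angles, then applies a fraction of that correction on each frame.

```python
import math


def pixel_error(target_xy, frame_size):
    """Offset of the target from the frame center, in pixels (x right, y down)."""
    cx = (frame_size[0] - 1) / 2.0
    cy = (frame_size[1] - 1) / 2.0
    return target_xy[0] - cx, target_xy[1] - cy


def focal_length_px(frame_size, hfov_deg):
    """Focal length in pixels for a pinhole camera with square pixels (assumed)."""
    return (frame_size[0] / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)


def steering_command(target_xy, frame_size, hfov_deg, gain=0.5):
    """Pan/tilt correction in degrees that moves the target toward the center.

    gain < 1 applies only part of the measured error per frame
    (a simple proportional controller, chosen here for illustration).
    """
    err_x, err_y = pixel_error(target_xy, frame_size)
    f = focal_length_px(frame_size, hfov_deg)
    pan = math.degrees(math.atan2(err_x, f))
    tilt = -math.degrees(math.atan2(err_y, f))  # image y axis points down
    return gain * pan, gain * tilt


def simulate(offset_deg=(8.0, -3.0), frame_size=(720, 576), hfov_deg=30.0, steps=10):
    """Run the loop against a stationary target; return the remaining angular offset."""
    pan_off, tilt_off = offset_deg  # target direction relative to the boresight
    f = focal_length_px(frame_size, hfov_deg)
    cx = (frame_size[0] - 1) / 2.0
    cy = (frame_size[1] - 1) / 2.0
    for _ in range(steps):
        # Where the target appears in the current frame.
        x = cx + f * math.tan(math.radians(pan_off))
        y = cy - f * math.tan(math.radians(tilt_off))
        d_pan, d_tilt = steering_command((x, y), frame_size, hfov_deg)
        # The gimbal turns toward the target, shrinking the offset.
        pan_off -= d_pan
        tilt_off -= d_tilt
    return pan_off, tilt_off


if __name__ == "__main__":
    print(simulate())
```

With a gain of 0.5 the remaining offset halves on every frame, so the target settles near the center within a handful of frames. A real tracker would also have to cope with target motion, measurement noise, and gimbal dynamics, none of which this sketch models.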

Sidebar 1

Downsizing

For many years, stabilization and tracking functions required separate sets of hardware. Over the past 10 years, that hardware has shrunk dramatically. A typical progression ran from a pair of 6U VME boards to a pair of PC/104 boards, then to a pair of chip-sized boards, and finally to a single small board or system-on-a-chip.

Even software can be tuned, engineered, and consolidated to maximize efficiency and minimize resources. The mathematical models on which the code is based, which describe the processes of stabilization and tracking, can thus be downsized to match the processor. When the models need to run on a smaller device, engineers can switch off expendable parts of the models until they run reliably on a smaller chip. One example is the GE Intelligent Platforms ADEPT3100, a rugged, 0.9 inch by 1.3 inch, 1.5 W board using a standard commercial processor, which can simultaneously stabilize video and track a single target (see Figure 1). The 0.2 ounce device digitizes and processes standard-definition PAL or NTSC analog video signals and provides two serial lines for interfacing to external platforms.
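One way to picture "switching off expendable parts" is as a per-frame time budget. The Python sketch below is purely illustrative: the stage names, costs, and priorities are hypothetical and do not describe the ADEPT3100 or any real pipeline. It drops optional processing stages, least important first, until the remaining work fits the budget. The 40 ms figure is the frame period of 25-frames-per-second PAL video.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    cost_ms: float      # hypothetical per-frame processing cost
    required: bool      # required stages are never dropped
    priority: int = 0   # for optional stages: lower value is dropped first


def fit_to_budget(stages, budget_ms):
    """Drop optional stages, least important first, until the cost fits."""
    active = list(stages)
    optional = sorted((s for s in stages if not s.required), key=lambda s: s.priority)
    for stage in optional:
        if sum(s.cost_ms for s in active) <= budget_ms:
            break
        active.remove(stage)
    total = sum(s.cost_ms for s in active)
    if total > budget_ms:
        raise ValueError("required stages alone exceed the budget")
    return [s.name for s in active], total


# Hypothetical pipeline, for illustration only.
PIPELINE = [
    Stage("stabilize", 12.0, required=True),
    Stage("track", 15.0, required=True),
    Stage("edge_enhance", 8.0, required=False, priority=2),
    Stage("multi_scale_search", 14.0, required=False, priority=1),
    Stage("overlay", 3.0, required=False, priority=3),
]

if __name__ == "__main__":
    print(fit_to_budget(PIPELINE, budget_ms=40.0))
```

In this made-up example the full pipeline costs 52 ms per frame, so the lowest-priority optional stage is dropped to bring the total down to 38 ms. A slower processor would raise every cost and force more stages out, while the required stabilization and tracking stages always stay.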

Figure 1: The GE Intelligent Platforms ADEPT3100 is a rugged, 0.9 inch by 1.3 inch board that uses a standard commercial processor and can simultaneously stabilize video and track a single target.

Doing more with less

Over the years, military unmanned platforms, sensors, and gimbals have shrunk in size to match the real-time data demands of combatants in asymmetrical warfare. There is often no front line, so even the smallest units need some sort of organic intelligence capability in order to operate effectively. Image processing technology has kept up with this trend and has produced boards that do much more with much less than in the past.

defense.ge-ip.com