Full-motion video distribution for defense using open-source Secure Reliable Transport

Story | May 04, 2020

ISO/IEC 13818 Part 1 (ITU-T Recommendation H.222.0) – released in 1995 – describes the synchronization of audio and video using a Transport Stream container structure (TS, MPEG2-TS, m2ts). The Transport Stream is unique in that it serves as a container for streaming video and as a container for static video files.

The Transport Stream container structure is highly extensible with support for synchronization of video, multiple audio tracks, and multiple data payloads. In 2006, when the Motion Imagery Standards Board (MISB) – tasked by the National Geospatial-Intelligence Agency (NGA) with making informed recommendations to the defense and intelligence community regarding video, imagery, and ancillary data – needed to select a standard container format for defense full-motion video (FMV), the Transport Stream was an obvious choice and continues to be the best available option.
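To make the container structure concrete, the following minimal sketch reads the fixed 4-byte header that begins every 188-byte Transport Stream packet. The field positions (sync byte 0x47, 13-bit packet identifier, 4-bit continuity counter) follow the published standard; the function and class names, and the `make_demo_packet` helper, are hypothetical and exist only for illustration.

```python
from typing import NamedTuple

TS_PACKET_SIZE = 188  # every Transport Stream packet has this fixed length
TS_SYNC_BYTE = 0x47   # first byte of every Transport Stream packet


class TsHeader(NamedTuple):
    payload_unit_start: bool  # True when a new PES packet or table section starts here
    pid: int                  # 13-bit packet identifier (selects video, audio, or data)
    continuity_counter: int   # 4-bit counter used to detect lost packets per PID


def parse_ts_header(packet: bytes) -> TsHeader:
    """Decode the fixed 4-byte header of one Transport Stream packet."""
    if len(packet) != TS_PACKET_SIZE:
        raise ValueError("a Transport Stream packet must be exactly 188 bytes")
    if packet[0] != TS_SYNC_BYTE:
        raise ValueError("missing 0x47 sync byte")
    b1, b2, b3 = packet[1], packet[2], packet[3]
    return TsHeader(
        payload_unit_start=bool(b1 & 0x40),
        pid=((b1 & 0x1F) << 8) | b2,
        continuity_counter=b3 & 0x0F,
    )


def make_demo_packet(pid: int, counter: int) -> bytes:
    """Hypothetical helper: build a payload-only packet filled with zero bytes."""
    header = bytes([
        TS_SYNC_BYTE,
        0x40 | ((pid >> 8) & 0x1F),  # payload_unit_start set, upper 5 PID bits
        pid & 0xFF,                  # lower 8 PID bits
        0x10 | (counter & 0x0F),     # payload only, no adaptation field
    ])
    return header + bytes(TS_PACKET_SIZE - len(header))
```

Because video, audio, and data payloads are each assigned their own packet identifier, a receiver can separate the multiplexed streams by filtering on `pid` alone.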

The most widely adopted transport-layer protocol for carriage of Transport Stream payloads remains the User Datagram Protocol (UDP). UDP has a number of key benefits in the national defense video context:

- UDP is a low latency transport-layer protocol in contrast to TCP

- Unidirectional transmission (via satellite, for example) is natively supported by UDP

- UDP requires minimal application overhead

- Point-to-multipoint communication is natively supported by the UDP Multicast address space (which facilitates a dominant amount of FMV delivery)

However, UDP also has inherent limitations that continue to trouble defense video distribution workflows:

- Designing and debugging multicast routing infrastructure requires a very advanced network engineering skillset

- Multicast is not supported by most of the VPN technologies that are heavily employed in secure remote contributor/receiver configurations. The workaround for multicast/VPN is tunneling, which entirely negates the intended efficiency of multicast.

- UDP carries no network telemetry or video telemetry, which makes root-cause diagnosis of “bad video” or “no video” difficult in complex deployment topologies that combine several physical-layer transmission media (satellite, wireless, wired) with highly variable network configurations.

- The latest generation of video codecs (H.265) is less resilient to jitter, the variance in time delay between data packets, to which UDP transmissions are especially vulnerable.

SRT to handle low-latency video

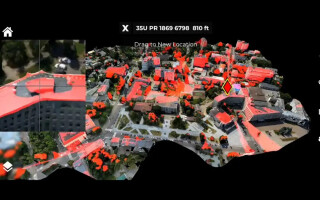

The Secure Reliable Transport Protocol (SRT), released as open source in April 2017, sought to address the challenges of live low-latency video transmission over unpredictable networks through the implementation of transport-layer mechanisms to enable packet-loss recovery and signal-timing reconstruction. (Figure 1.)

Figure 1 | The Secure Reliable Transport (SRT) protocol was designed to handle live low-latency video over unpredictable networks.

Traditional mechanisms used to address signal-timing corruption in transit (jitter) have previously been available in the form of network-layer buffers and application-layer buffers. Buffer implementations and configuration are application-specific and the manifestation of video degradation resulting from jitter is not uniform across applications. For example, the VLC application (a multimedia framework) responds to jitter by dropping frames, reducing perceived frame rate while maintaining frame integrity. The ffmpeg application responds to the same amount of jitter with video artifacts, maintaining real-time frame rate while reducing frame integrity. In both instances, the resultant video product is unusable for the purposes of processing, exploitation, and dissemination (PED). Since jitter compensation is native to the SRT protocol, tuning individual application buffers on a per-connection basis would not be required in the aforementioned example. (Figure 2.)

Figure 2 | H.265 encoded signal (720p30, 1 Mbps) delivered via RAW UDP (left) and SRT (right).
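The idea of protocol-level jitter compensation can be illustrated with a simplified model: each packet carries its sender timestamp, and the receiver releases it at a fixed offset from that timestamp so the original spacing is restored. The sketch below is an assumption-laden toy (integer milliseconds, no clock drift, no retransmission) and is not SRT's actual algorithm; the function name is hypothetical.

```python
from typing import List, Optional, Tuple


def playout_times(packets: List[Tuple[int, int]], latency_ms: int) -> List[Optional[int]]:
    """Return the release time for each packet, or None if it arrived too late.

    packets: (send_time_ms, arrival_time_ms) pairs in send order.
    latency_ms: fixed delay added on top of the first packet's arrival time.
    """
    if not packets:
        return []
    first_send, first_arrival = packets[0]
    releases: List[Optional[int]] = []
    for send, arrival in packets:
        # Due time preserves the sender's spacing, shifted by the fixed latency.
        due = first_arrival + (send - first_send) + latency_ms
        releases.append(due if arrival <= due else None)
    return releases


# Packets sent every 10 ms arrive with uneven delay; the last one is very late.
arrivals = [(0, 50), (10, 75), (20, 71), (30, 140)]
print(playout_times(arrivals, latency_ms=40))  # [90, 100, 110, None]
```

The first three packets leave the buffer exactly 10 ms apart despite arriving out of step, while the fourth misses its deadline and would be skipped (or recovered by retransmission in a real implementation). A larger `latency_ms` tolerates more jitter at the cost of added delay.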

SRT is a substantially modified version of UDP-based Data Transfer (UDT) protocol. UDT was designed for high-throughput file transmission over unpredictable networks and aimed to maximize use of channel capacity. Real-time video packet generation is a function of configured video bit rate and is slow when compared to file-read speeds. For this reason, SRT implements changes to the buffer depletion and congestion control mechanisms in UDT and adds flow-control and payload-encryption mechanisms. From the perspective of a network administrator, an SRT data packet is a standard UDP packet with UDT header information contained inside the UDP data payload.
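As a rough illustration of what sits inside the UDP payload, the sketch below decodes a 16-byte header laid out as four 32-bit big-endian words. The layout used here (a leading control/data flag, a 31-bit sequence number, a 2-bit encryption-key field, a timestamp, and a destination socket ID) is an assumption based on the publicly documented SRT packet format, not something stated in this article, and should be checked against the specification before use. The function name and dictionary keys are hypothetical.

```python
import struct

SRT_HEADER_BYTES = 16  # assumed: four 32-bit words, as in UDT


def parse_srt_header(udp_payload: bytes) -> dict:
    """Decode the assumed 16-byte header at the start of a UDP payload."""
    if len(udp_payload) < SRT_HEADER_BYTES:
        raise ValueError("payload shorter than the assumed 16-byte header")
    word0, word1, timestamp, dest_socket_id = struct.unpack(
        "!IIII", udp_payload[:SRT_HEADER_BYTES]
    )
    is_control = bool(word0 >> 31)  # top bit: 1 = control packet, 0 = data packet
    header = {
        "is_control": is_control,
        "timestamp_us": timestamp,
        "dest_socket_id": dest_socket_id,
        "payload_length": len(udp_payload) - SRT_HEADER_BYTES,
    }
    if not is_control:
        header["sequence_number"] = word0 & 0x7FFFFFFF
        # Assumed position of the 2-bit key field; 0 would mean "not encrypted".
        header["encryption_key_flag"] = (word1 >> 27) & 0x3
    return header
```

A capture tool that sees only "UDP traffic on some port" can apply a decoder like this to tell data packets from control packets and to follow sequence numbers.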

Simplified firewall traversal

The SRT connection employs a caller/listener construct. (Figure 3.) The caller is the SRT endpoint that initiates the connection, which is not necessarily the endpoint that originates the video stream. SRT connection setup implements Network Address Translation Traversal (NAT-T), a technique for establishing and maintaining IP connections across gateways (firewalls, for example) that implement NAT. Support for NAT-T enables SRT video consumption applications (VLC, for example) located behind a firewall to call out to SRT video producers, such as video encoders configured as listeners, without the need for explicit rules that allow ingress UDP traffic on specified firewall ports. (Figure 4.)

Figure 3 | SRT packet structure diagram.

Figure 4 | SRT caller/listener connection with firewall traversal.
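The reason a call-out pattern passes through a stateful firewall can be shown with plain UDP sockets on the loopback interface: the listener learns the caller's address from the first inbound datagram and sends the stream back along that same path. This is only an analogy under simplifying assumptions; it performs no SRT handshake, and the function name and message contents are hypothetical.

```python
import socket


def caller_listener_demo() -> bytes:
    """Show a listener replying to whatever address the caller's packet came from."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    caller = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
        listener.settimeout(2.0)
        caller.settimeout(2.0)
        listener_addr = listener.getsockname()

        # The caller (e.g. a player behind a firewall) sends the first packet.
        caller.sendto(b"hello-from-caller", listener_addr)

        # The listener (e.g. an encoder) records the source address it observed...
        _, observed_caller_addr = listener.recvfrom(2048)

        # ...and sends media back to that address, reusing the outbound flow.
        listener.sendto(b"video-payload", observed_caller_addr)
        data, _ = caller.recvfrom(2048)
        return data
    finally:
        caller.close()
        listener.close()
```

In a real deployment the firewall in front of the caller treats the returning datagrams as part of the flow the caller opened, which is why no dedicated ingress rule is needed on the caller's side.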

SRT stream telemetry

Because SRT is connection-oriented, network video administrators receive positive confirmation that nodes in the video distribution chain are communicating and can derive connection health from SRT telemetry. This confirmation is crucial because diagnosis of outages and degradation in defense FMV distribution chains is often required following network security updates or network equipment replacement. Pinpointing the culprit network segment, much less the culprit network device, in short order without any telemetry is a tradecraft known to only a few elite network video operators and vendors in the defense community. Distilling the defense video distribution architecture into statistics-producing, connection-oriented communications between video senders and video receivers makes diagnostic tasks manageable for entry-level network operators.
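A sketch of how per-connection statistics might be turned into a simple health label follows. The field names, the `LinkStats` class, and the 1 percent warning threshold are all hypothetical choices for illustration; a real SRT implementation exposes its own counters under its own names.

```python
from dataclasses import dataclass


@dataclass
class LinkStats:
    """Hypothetical snapshot of counters for one connection."""
    packets_sent: int
    packets_lost: int     # reported missing at least once (may have been recovered)
    packets_dropped: int  # never recovered before their playout deadline


def loss_percent(stats: LinkStats) -> float:
    """Percentage of sent packets that were reported lost."""
    if stats.packets_sent == 0:
        return 0.0
    return 100.0 * stats.packets_lost / stats.packets_sent


def assess_link(stats: LinkStats, loss_warn_pct: float = 1.0) -> str:
    """Map counters to a coarse label an entry-level operator can act on."""
    if stats.packets_sent == 0:
        return "no traffic"
    if stats.packets_dropped > 0:
        return "degraded"    # viewers are seeing damaged or missing video
    if loss_percent(stats) > loss_warn_pct:
        return "recovering"  # loss is present but being repaired in time
    return "healthy"
```

Evaluating a label like this at each hop in the distribution chain narrows a "bad video" report to the first segment that stops reporting "healthy".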

Latency and flow control

The important distinctions between SRT bidirectional connections and HTTP/TCP connections are latency and flow control. HTTP/TCP streaming implementations often exhibit as much as 30 seconds of end-to-end delay due to multiple processing steps and buffers along the signal path. Moreover, latency can vary with link conditions because TCP requires that all bytes be delivered completely and in order. HTTP/TCP does not allow video to skip over bad bytes; instead, the protocol keeps requesting the missing data, resulting in “rebuffering” during congested network conditions. Video signals can survive a few lost bytes, and SRT connections can correct or, in the worst case, ignore missing data in the interest of maintaining a target connection latency. SRT connection latency, while fixed for a given connection, can be adapted to network conditions through explicit configuration that is tuned from link telemetry.
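One way to derive a latency setting from link telemetry is a rule of thumb that scales the measured round-trip time, so that a lost packet can be requested and resent before its playout deadline. The multiplier of 4 and the 120 ms floor below are assumed values for illustration, not figures from this article, and the function name is hypothetical.

```python
def suggest_latency_ms(rtt_ms: float, multiplier: float = 4.0, floor_ms: float = 120.0) -> float:
    """Suggest a fixed connection latency from a measured round-trip time.

    multiplier: assumed number of round trips to allow for loss recovery.
    floor_ms: assumed minimum latency, applied on very short links.
    """
    if rtt_ms < 0:
        raise ValueError("round-trip time cannot be negative")
    return max(floor_ms, rtt_ms * multiplier)


print(suggest_latency_ms(20.0))   # 120.0 (floor applies on a short terrestrial link)
print(suggest_latency_ms(600.0))  # 2400.0 (a long satellite path needs far more)
```

Lossier links would justify a higher multiplier, which is the kind of adjustment the connection statistics make possible.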

SRT uses the Advanced Encryption Standard (AES) in counter mode (AES-CTR) with a short-lived key to encrypt media streams. SRT encrypts the media stream at the transmission payload level (the UDP payload of MPEG-TS/UDP encapsulation, which is typically seven MPEG-TS packets of 188 bytes each). A small packet header is required to keep the cipher counter synchronized between endpoints, and counter-mode ciphers require no padding. Secure remote video contribution and video reception packages often rely on Virtual Private Network (VPN) connections to protect video streams in transit. In some instances, defense security policy dictates tunnel-in-tunnel for added information assurance. Traditional IPsec/ESP VPNs consume substantial overhead from padding and require GRE tunnels (added overhead) to enable the transmission of UDP/multicast. The AES-256 encryption engine in SRT adds a video-specific layer of security to existing secure deployments without burdening the stream with costly overhead.
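The payload arithmetic can be worked through directly. The sketch below computes the size of a seven-packet payload and the share of each datagram taken by headers, assuming a 16-byte SRT header, an 8-byte UDP header, and a 20-byte IPv4 header with no options; those header sizes are assumptions not stated in this article. The 1 Mbps figure in the usage line is the bit rate quoted in the Figure 2 caption, treated here as the total stream rate.

```python
TS_PACKET_BYTES = 188
TS_PACKETS_PER_DATAGRAM = 7
SRT_HEADER_BYTES = 16   # assumed header size
UDP_HEADER_BYTES = 8
IPV4_HEADER_BYTES = 20  # assumed: IPv4 without options


def payload_bytes() -> int:
    """Media bytes carried in one datagram."""
    return TS_PACKET_BYTES * TS_PACKETS_PER_DATAGRAM


def header_overhead_fraction() -> float:
    """Fraction of each IP packet spent on SRT, UDP, and IPv4 headers."""
    headers = SRT_HEADER_BYTES + UDP_HEADER_BYTES + IPV4_HEADER_BYTES
    return headers / (headers + payload_bytes())


def datagrams_per_second(stream_bitrate_bps: float) -> float:
    """Datagram rate needed to carry a stream at the given bit rate."""
    return stream_bitrate_bps / (payload_bytes() * 8)


print(payload_bytes())                            # 1316
print(round(header_overhead_fraction() * 100, 1)) # 3.2 (percent)
print(round(datagrams_per_second(1_000_000)))     # 95
```

Because counter mode needs no padding, the 1,316-byte payload stays the same size after encryption, so these figures hold whether or not encryption is enabled (under the stated assumptions).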

Why use SRT?

Not all defense video transport infrastructure is challenged; there are many examples of well-resourced and robust architectures with capacity to spare and low rates of failure. The risk is that the insatiable appetite for video will continually add stress and complexity to defense networks and that diagnosis of issues will cause lengthier outages, unnecessary equipment replacement, unwieldy email threads, more overtime, and similar problems.

SRT is a nonproprietary, API-based video stream delivery solution with strong vendor adoption and strong commercial end user adoption. More than 130 companies have endorsed the open source project by supporting the SRT Alliance, with users including such companies as Comcast, ESPN, Fox News, Microsoft, NBC Sports, and the NFL. Widely used free tools that provide the foundation for many national defense video applications – such as VideoLAN’s VLC, FFmpeg, GStreamer, and Wireshark – now support SRT. SRT presents an alternative to raw UDP that features native support for MPEG-TS, accurate signal timing, enhanced firewall traversal, and no requirement for a central server.

Note: The SRT protocol was initially developed by Haivision Systems Inc. and subsequently released as open source in April 2017. Open source SRT is distributed under Mozilla Public License 2.0. Any third party is free to use the SRT source in a larger work regardless of how that larger work is compiled.

Jack Welsh is a senior systems engineer supporting the Defense Applications Team at Haivision. Prior to joining Haivision in 2012, Jack served as engineering support to multiple U.S. Department of Defense Airborne ISR and IPSATCOM programs. Jack holds a bachelor’s in computer engineering from the University of Delaware. Readers may contact the author at jwelsh@haivision.com.

Haivision • www.haivision.com