Data-driven design for HMI development in avionics design

October 11, 2017

Engineers and designers who work on glass cockpit displays continue to look for effective ways to interact with the inanimate objects they want to control. Using a data-driven approach similar to that used in video games, a structure can be created through which advanced human-machine interface (HMI) applications are deployed to meet the needs of avionics developers.

Data-driven models and model-based design are two terms that are cropping up more frequently in discussions among avionics engineers and designers, as well as in standards steering committees. All are focused on the most effective ways for humans to interact with the inanimate objects they wish to control. HMI can mean any method a human can use to interact with a device. Thus, a brake lever on a trolley car is an HMI device. For the purpose of this discussion, the definition of HMI will be limited to that of a pilot or an unmanned aerial system (UAS) ground station operator interacting with a glass display to effectively control and monitor an air vehicle.

The interaction between humans and aircraft systems requires complex actions and decision-making with split-second timing. For example, the space shuttle, with 3.5 million parts, used to be controlled by four or more astronauts, with a hierarchy of commander, pilot, and mission specialists. However, consider the F-22 Raptor fighter aircraft/weapons system: It has millions of parts and is heralded by many as one of the most complex systems developed by humans, yet it is controlled by a single individual – the pilot. It is important to note that this complex weapons system has glass multifunction displays (MFDs) that control most of the system’s functions.

There are many ways to create a graphical display. Software developers can use a set of graphics application programming interfaces (APIs), such as OpenGL, or any of a myriad of tools for creating the interactive dynamic graphics through which users control systems on glass displays. Many of the tools employ an integrated development environment (IDE) that stores the animated control graphics in a native format and then uses a code generator to create a source code file. In some cases, the code generator will optimize the native format file as it does so. The generated source file is then compiled into an executable program, in many cases by an optimizing compiler that changes the executable even more. This would be a worst-case scenario, in that most code generators and most optimizing compilers have settings that allow the user to control the degree of optimization.

The downside of this design approach is that it is often difficult, if not impossible, to baseline the ensuing code files and accurately track the effect of minor changes in these files. For example, if a simple shape is drawn in a frame and then is subsequently moved a few pixels to the left or right, that action could cause an optimizing code generator to create an entirely different output file, making that minor change impossible to baseline or track. The issue could be further exacerbated when the target display changes, which then calls for a change in the display layout, which needs to be redeveloped to accommodate the new target.

A data-driven approach

The gaming industry has long been faced with developing video games that need to run on a number of platforms. Given the number of game consoles that come and go, and the relatively short life cycle of many games, the industry needed a method that would let game developers focus on the game play and environment of the game and not on constantly tweaking the game design to accommodate a given game console. The solution was to design to a gaming engine, for instance, to Unreal Engine 4. Any game console that supported Unreal Engine 4 would then, by definition, support the original game design. Game designers could now focus on the game design and playability and not worry about the target gaming platform.

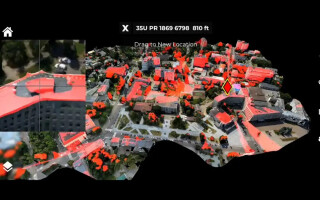

Suppose the same approach were used in the design of a glass HMI display. A graphics engine would sit on the target platform (embedded display system) and would process data to create a dynamic graphical display and its associated behavior. The HMI designer would focus on the look and feel of the display and not be concerned with the target system. In fact, that display could be used in an embedded cockpit, a flight simulator, or even a graphics tablet for training or marketing-related activities. The graphics engine would process a command stream downloaded to the target system as a file or array of data. Since it is pure data, there would be no need to compile or link it into an executable code base on the target system. The data would not change from display to display, thus creating a stable, consistent display system. Since the target-based engine is simply processing data, it would be a straightforward task to dynamically overlay this data with new data on the fly.
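To make the idea concrete, here is a minimal sketch of a display described purely as data and interpreted by a small engine. Everything in it is hypothetical and chosen only for illustration: the JSON layout, the `rect` and `text` operations, the normalized coordinates, and the `render` function do not come from any particular tool or standard. The point is only that the same data can drive targets of different sizes without being recompiled.

```python
import json

# Hypothetical display description: pure data, nothing to compile or link.
# Coordinates are normalized (0.0 to 1.0) so the data is target-independent.
DISPLAY_JSON = """
[
  {"op": "rect", "x": 0.10, "y": 0.10, "w": 0.30, "h": 0.20, "color": "green"},
  {"op": "text", "x": 0.12, "y": 0.15, "value": "ALT {altitude_ft} FT"}
]
"""


def render(commands, width_px, height_px, live_values):
    """Interpret the command stream and return pixel-space draw calls."""
    calls = []
    for cmd in commands:
        x = round(cmd["x"] * width_px)
        y = round(cmd["y"] * height_px)
        if cmd["op"] == "rect":
            w = round(cmd["w"] * width_px)
            h = round(cmd["h"] * height_px)
            calls.append(("rect", x, y, w, h, cmd["color"]))
        elif cmd["op"] == "text":
            # Live values (for example, from the aircraft bus) fill the template.
            calls.append(("text", x, y, cmd["value"].format(**live_values)))
        else:
            raise ValueError(f"unknown op: {cmd['op']}")
    return calls


commands = json.loads(DISPLAY_JSON)
live = {"altitude_ft": 12000}

# The same data drives two differently sized targets.
cockpit = render(commands, 1024, 768, live)
tablet = render(commands, 800, 600, live)
```

A real engine would issue graphics calls rather than return tuples, but the division of labor is the same: the designer edits the data, and only the engine is specific to the target.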

This approach means that the look and feel of the display could be altered while the target system is running and enables so-called man-in-the-loop HMI glass display design in real time. Stimulus and response times could be measured, altered, and evaluated in real time, saving many engineering design hours and rework.
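The following sketch illustrates, under simplifying assumptions, what altering the display while the target is running might look like. The widget names, the `apply_overlay` and `draw_frame` helpers, and the frame loop are all hypothetical; a real engine would receive the overlay over a data link and draw to a screen. The timing measurement only shows where a stimulus-to-response measurement could be taken, not how a certified system would do it.

```python
import time


def apply_overlay(display, overlay):
    """Return a new display with the overlay's entries merged in by widget id."""
    merged = {wid: dict(props) for wid, props in display.items()}
    for wid, props in overlay.items():
        merged.setdefault(wid, {}).update(props)
    return merged


def draw_frame(display):
    """Stand-in for the engine's per-frame pass over the display data."""
    return sorted(f"{wid}:{props['color']}" for wid, props in display.items())


# Hypothetical running display and an overlay that arrives before frame 3.
display = {"gear_warning": {"color": "amber"}, "fuel_bar": {"color": "green"}}
overlays_by_frame = {3: {"gear_warning": {"color": "red"}}}

frames = []
swap_seconds = None
for frame_no in range(1, 5):
    overlay = overlays_by_frame.get(frame_no)
    if overlay is not None:
        start = time.perf_counter()
        display = apply_overlay(display, overlay)  # new look, no rebuild
        swap_seconds = time.perf_counter() - start
    frames.append(draw_frame(display))
```

Frames 1 and 2 show the amber warning; frames 3 and 4 show red, and the engine loop itself never stopped or changed.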

A data-driven example

A good example of a data-driven architecture is the Aeronautical Radio, Inc. (ARINC) 661 specification, where the HMI is represented by a data format or model. In addition, the use case is very much like the gaming case described earlier, in that many different user applications (UA) can send commands to a common cockpit display system (CDS) and have those commands drive the CDS to communicate the status of the UA component, effectively providing control input to the UA. In theory, any UA written to the ARINC 661 specification can interface to an ARINC 661 CDS in much the same way that those earlier theoretical game developers write their game software to a gaming engine.

Figure 1: Diagram of an ARINC 661 system, in which a single cockpit display system (CDS) is communicating with many user applications (UAs).

However, that is where the similarity ends. In the gaming world, the software game is defined once to the engine and then is spawned to many game consoles for execution. The opposite is true in an ARINC 661 system: A single CDS is communicating with many UAs in virtually all aircraft systems (Figure 1). Look at it this way: A single CDS can be used as the pilot-aircraft interface. Since a single CDS is controlled by many UAs, a clear definition of the communication constructs is an essential component of the ARINC 661 definition. In addition, a UA can communicate with several CDSs simultaneously and control its data representation on each of them. This methodology is being deployed on many aircraft, most notably the Boeing 787 Dreamliner.
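The many-to-one relationship can be sketched with a toy model. This is not the ARINC 661 message format or widget library; the `ToyCDS` class, its method names, and the widget and UA names are all invented for illustration. It shows only the routing idea: each UA owns its own widgets on the shared display, updates them by sending data, and receives crew input back.

```python
class ToyCDS:
    """Toy stand-in for a cockpit display system shared by several UAs."""

    def __init__(self):
        self.widgets = {}    # (ua_id, widget_id) -> parameter dict
        self.listeners = {}  # ua_id -> callback for crew events

    def load_definition(self, ua_id, widget_ids, on_event):
        """Register the widgets a UA owns and where to send crew events."""
        for widget_id in widget_ids:
            self.widgets[(ua_id, widget_id)] = {}
        self.listeners[ua_id] = on_event

    def set_parameter(self, ua_id, widget_id, name, value):
        """Handle a runtime command from a UA; it can touch only its own widgets."""
        key = (ua_id, widget_id)
        if key not in self.widgets:
            raise KeyError(f"{ua_id} has no widget {widget_id}")
        self.widgets[key][name] = value

    def crew_input(self, ua_id, widget_id, event):
        """Route a crew interaction back to the UA that owns the widget."""
        if (ua_id, widget_id) not in self.widgets:
            raise KeyError(f"{ua_id} has no widget {widget_id}")
        self.listeners[ua_id](widget_id, event)


fuel_events, gear_events = [], []
cds = ToyCDS()
cds.load_definition("fuel_ua", ["qty_label", "pump_button"],
                    lambda w, e: fuel_events.append((w, e)))
cds.load_definition("gear_ua", ["status_label"],
                    lambda w, e: gear_events.append((w, e)))

# Two independent UAs drive the same display.
cds.set_parameter("fuel_ua", "qty_label", "text", "4200 KG")
cds.set_parameter("gear_ua", "status_label", "text", "DOWN")

# The pilot presses a button; only the owning UA hears about it.
cds.crew_input("fuel_ua", "pump_button", "pressed")
```

Keying widget state by both UA and widget identifier is one simple way to keep UAs from interfering with each other, which is the kind of question a clearly defined communication construct has to settle.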

The IData Tool Suite from ENSCO Avionics is one mechanism designers can use to create and deploy advanced HMI applications – for example, in a cockpit display – within a data-driven, model-based development environment. A single design can target multiple desktop or embedded systems and support multiple stages of a product life cycle. Through data-driven development, the tool can help designers reduce the time required to create, test, and deploy HMIs. IData’s approach – which borrows from various industries that employ computer graphics – combines content-creation tools with a high-performance runtime engine processing an optimized data file.

Ray Niacaris has spent the last six years at ENSCO Avionics and the last 15 years working with HMI tools. He has more than 35 years of experience in real-time embedded systems and computer graphics. He holds degrees in electrical engineering and computer science from the Illinois Institute of Technology, has completed advanced studies in human factors and advanced product design, and has served on the faculty at the Illinois Institute of Design. Readers may reach Ray at niacaris.raymond@idatavs.com.

ENSCO, Inc. www.ensco.com